Research on Micro-blog New Word Recognition Based on SVM

2017-10-10

Chaoting Xiao, Jianhou Gan*, Bin Wen, Wei Zhang, and Xiaochun Cao

New word discovery plays a significant role in Natural Language Processing (NLP). Because traditional mutual information performs poorly on multi-character strings, we improve the traditional mutual information and adjacency entropy methods and propose enhanced mutual information and relative adjacency entropy. Because computing many features over massive data is slow, we use the MapReduce parallel computing model to extract features such as enhanced mutual information, relative adjacency entropy, and background document frequency. The eight extracted features form the feature vector of each candidate word, and an SVM model is trained on the labelled corpus. The experiments show that the proposed method speeds up the computation and shortens the time required by the whole recognition process. In addition, compared with existing methods, the F value reaches 86.98%.

new word recognition; Natural Language Processing (NLP); enhanced mutual information; relative adjacency entropy; MapReduce; SVM

1 Introduction

New word recognition is one of the most difficult parts of Chinese word segmentation. Sproat and Emerson pointed out that 60% of the errors in Chinese word segmentation are caused by new words[1]. Many new words now spread through micro-blogs, for example ‘伐木累’, ‘葛优瘫’ and ‘北京瘫’. Micro-blog text contains a considerable proportion of new words; according to linguists' statistics, more than 800 new words are produced each year on average[2]. In the field of new word recognition, there is no standard definition of ‘new word’. Based on existing research, a new word is generally expected to have the following properties. From the perspective of the word itself, it should be an independent word. From the perspective of frequency, it should be widely adopted: in a corpus, it appears frequently in many documents and is used by many people. From the perspective of time, it has either just appeared within a certain period or has acquired a new meaning, that is, ‘the new use of an old word’[3].

At present, new word recognition methods fall into three groups: rule-based, statistical, and combinations of the two. Rule-based methods build a rule base and match candidates against templates. Their precision is high, but rules are difficult and costly to write by hand and are strongly tied to a particular domain. Statistical methods are flexible, adaptable, and portable, but they need a large corpus to compute the statistics and are therefore time-consuming. For example, Sui and others[4] segmented the corpus, extracted closely related words by computing the static union rate among them, and then used grammar rules and domain features to obtain domain terms with high confidence. Because the rules capture only domain-specific features, the method does not transfer to other corpora. Sornlertlamvanich and others trained a decision-tree model for new word recognition and reached a precision of 85%[5], but the method is not suitable for large-scale corpora. Peng and others[6] treated segmentation and new word discovery jointly in a statistical framework, using a CRF model that combines lexical features and domain knowledge to extract new words, and added the discovered words to the dictionary to improve recognition. The method improves segmentation accuracy but is slow. Liu and others[7] used left and right information entropy and the log-likelihood ratio (LLR) to determine word boundaries and extract candidate new words. The method extracts few features and its precision is not high. Lin and others[8] extracted new words based on a word-internal model combined with mutual information, IWP, and position-word probability. The mutual information they propose is not suitable for multi-character strings, which limits the method. Li and others[9] used word frequency, word probability, and similar features to train an SVM model, treating new word recognition as a classification problem. The method cannot recognize low-frequency new words and therefore produces many garbage strings. Zhao and others[10] iteratively applied mutual information, left (right) entropy, and left (right) adjacency right (left) average entropy to obtain a candidate list of new words, and then filtered the list with a Chinese collocation library. Its limitation is that, when mutual information is computed, a multi-character string is split into two substrings, which affects the recognition results. Wang and others[11] mined new words from the internet using time series information, combining a new dynamic feature with commonly used statistical methods. The method compares the trend of each candidate word's curve over a period of time and requires every part of a new word to follow the same trend. However, its accuracy is not very high. Shuai and others[12] proposed a filter based on redefined stop words and a filter based on an iterative context entropy algorithm, and introduced lexical features to recognize new words. The method depends strongly on rules. Su and others[13] replaced adjacency entropy with a weighted adjacency entropy and achieved good performance. Li and others[14] used internal word probability, mutual information, word frequency, and word probability rules as features to train an SVM model, but the precision was only 61.78%.

Because the above methods are slow on large-scale corpora and statistical methods alone yield low precision, this paper analyzes a micro-blog corpus. We first reduce the noise in the micro-blog corpus. Then, on top of word segmentation, we use the N-gram statistical method to extract new word candidates. We propose a new filtering algorithm and combine it with the stop word list released by Harbin Institute of Technology to filter the candidates. Next, an SVM classifier is trained on multiple feature values, including enhanced mutual information, relative adjacency entropy, and background document frequency. Finally, the trained SVM model is used to recognize the new words in the micro-blog test set. The method speeds up training of the new word recognition model, which is otherwise slowed by computing many features over a large-scale corpus, and it also improves the precision of new word recognition. The detailed process is shown in Fig.1.

2 New Word Discovery Method Based on Micro-blog Content

2.1 Preprocessing of the corpus

Word use and grammar in micro-blogs are highly informal, which produces a large amount of noisy data. This noise affects feature extraction and increases model training time. According to the features of the micro-blog corpus, this paper reduces the negative influence of noisy data on training. Through statistics and analysis, we find that many posts carry topic labels, expression labels, and so on. The specific labels are shown in Table 1.

Fig.1 Overall flow chart of micro-blog new words extraction.

| Label | Description |
| --- | --- |
| #words# | Hot topic; ‘words’ means the keywords of the topic |
| @name | Remind a user; ‘name’ means the username to be reminded |
| 【sentence】 | Micro-blog theme; ‘sentence’ is the summary or title of the main body of the micro-blog |
| [word] | Expression word; ‘word’ means the mind and emotion, etc., of the author or paper |

Based on the analysis of the micro-blog corpus, we find that ‘@’ mentions, [expression] labels, and URL links appear in most micro-blog content. ‘@’ is usually followed by a user name, and because many user names are random strings, they cannot contain new words. [Expression] labels and URL links likewise cannot contain new words. These noisy data strongly affect the generation of candidate words, so they must be eliminated. This paper removes these three kinds of labels from the micro-blog data with regular expressions.
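A minimal sketch of this cleaning step in Python is given here. The paper does not list its regular expressions, so the three patterns and the function name `clean_microblog` are illustrative assumptions rather than the expressions actually used.

```python
import re

# Illustrative patterns (assumptions): the paper does not give its exact expressions.
URL_RE = re.compile(r"https?://[A-Za-z0-9./?=&%_~:+-]+")   # URL links
MENTION_RE = re.compile(r"@[^\s@:：,，。]+")                  # '@' followed by a user name
EXPRESSION_RE = re.compile(r"\[[^\[\]]{1,8}\]")             # short [expression] labels


def clean_microblog(text: str) -> str:
    """Remove URL links, @mentions and [expression] labels, then tidy whitespace."""
    text = URL_RE.sub("", text)
    text = MENTION_RE.sub("", text)
    text = EXPRESSION_RE.sub("", text)
    return re.sub(r"\s+", " ", text).strip()


if __name__ == "__main__":
    print(clean_microblog("@小明 今天好开心[哈哈] http://t.cn/abc123 #新词#"))
```

Topic labels such as #words# are left in place here, because the text only names ‘@’, [expression] and URL links as labels to eliminate.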

2.2 Filtering algorithm

We apply the N-gram algorithm to the preprocessed micro-blog corpus. Its basic idea is to slide a window of size N over the text. After word segmentation of the micro-blog data, we use N-grams over the segmented words to generate the candidate words. If the corpus is ‘中文新词识别’, the result after word segmentation will be ‘中文/新/词/识别’. When N is 2, the candidate words will be ‘中文新/新词/词识别’. When N is 3, the candidate words will be ‘中文新词/新词识别’. We extract all the candidate new words from the micro-blog corpus for N equal to 2, 3 and 4. These candidate new words contain many garbage strings, so they need to be filtered. The news corpus provided by Fudan University consists of news published before 2002; its language is formal, contains no colloquial expressions, and rarely contains new words from the internet. Therefore, this paper proposes a filtering algorithm that combines the news corpus and a stop word list. The pseudo algorithm is shown in Table 2, where N is the news corpus, W stands for the candidate new word set of the micro-blog corpus, T is the stop word list, and NL is the candidate new word set after filtering.
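The candidate generation and filtering steps can be sketched as follows. The tokenization ‘中文/新/词/识别’ is assumed because it reproduces the candidate lists given for N = 2 and N = 3. The body of Table 2 is not reproduced in this text, so the two filtering rules in `filter_candidates` (drop a candidate that occurs in the news corpus, drop a candidate that begins or ends with a stop word) are assumptions about how the news corpus and stop word list might be combined, not the authors' exact algorithm.

```python
from typing import Iterable, List, Set


def ngram_candidates(tokens: List[str], sizes: Iterable[int] = (2, 3, 4)) -> List[str]:
    """Join every run of n consecutive segmented words into one candidate string."""
    candidates = []
    for n in sizes:
        for i in range(len(tokens) - n + 1):
            candidates.append("".join(tokens[i:i + n]))
    return candidates


def filter_candidates(candidates: Iterable[str], news_text: str,
                      stop_words: Set[str]) -> List[str]:
    """Hypothetical filter: returns the set NL from candidates W, news corpus N, stop list T."""
    kept: List[str] = []
    for cand in candidates:
        if cand in news_text:
            continue  # assumed rule: strings seen in formal pre-2002 news are not new
        if cand[0] in stop_words or cand[-1] in stop_words:
            continue  # assumed rule: candidates bordered by a stop word are garbage
        if cand not in kept:
            kept.append(cand)
    return kept


if __name__ == "__main__":
    tokens = ["中文", "新", "词", "识别"]
    print(ngram_candidates(tokens, sizes=(2,)))
    print(ngram_candidates(tokens, sizes=(3,)))
```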

3 Feature Selection of Candidate New Words

After filtering, the candidate words still contain some noise, such as ‘富美喜’, ‘女票不’ and ‘逼格真’. Therefore, we use statistical methods to quantify the features of the candidate new words. These include mutual information[10], which measures internal coagulation, and information entropy[10] and background document frequency, which measure the external degree of freedom.

Table 2 Filtering algorithm.

3.1 Internal coagulation

Mutual information[10] measures the correlation between two events. The traditional mutual information formula[10] is defined only for two-character strings, so it applies only to two-character new words. For a multi-character candidate new word S = {s1s2…sn}, the common practice is to take its two longest substrings Sleft = {s1s2…sn-1} and Sright = {s2s3…sn}. A high correlation between the two substrings indicates that they combine closely and that S is more likely to be a word. A low correlation indicates that they depend little on each other. However, the mutual information obtained in this way is not very accurate. This paper therefore improves the traditional mutual information formula[10] and proposes enhanced mutual information, which is suitable for multi-character strings. The definition is as follows.

(1)

where W is the total number of words in the micro-blog corpus and P is the frequency of a string in the corpus. The greater the enhanced mutual information, the more likely the current string is a new word.

3.2 External freedom degree

Information entropy reflects the average information content carried by the outcome of an event. Intuitively, a meaningful new word is not only repeated in the text but also appears in different contexts, which reflects the string's independence and freedom of usage. However, the more often a string appears in the corpus, the larger its adjacency entropy tends to be, so adjacency entropy is biased against low-frequency strings. This paper proposes a relative adjacency entropy. In our view, a string with a higher word probability than its substrings should be regarded as a new word. For a string W = {w1w2…wn}, take its longest substrings Wleft = {w1w2…wn-1} and Wright = {w2w3…wn}. We subtract the weighted adjacency entropy of the substring from the weighted adjacency entropy of the string, and take the minimum of the resulting left and right values as the relative adjacency entropy.

We define α = {α1, α2, …, αn} and β = {β1, β2, …, βn} as the left and right context sets, respectively, of the candidate repeated string ω in the corpus X. The left and right context entropies of ω in the corpus X are defined as follows.

(2)

(3)

The weighted relative adjacency entropy is defined as follows.

$$C_r(\omega) = \lambda C_r(\omega) - (1-\lambda)\,C_r(\omega_{left}) \tag{4}$$

$$C_L(\omega) = \lambda C_L(\omega) - (1-\lambda)\,C_L(\omega_{right}) \tag{5}$$

The minimum formula of relative adjacency entropy is defined as:

$$C(\omega) = \min\{C_L(\omega),\, C_r(\omega)\} \tag{6}$$

From the above definitions we can conclude that the relative adjacency entropy is small for character strings that are used only in fixed contexts and large for character strings that are used in many different contexts.
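The definitions in this subsection can be illustrated with a short Python sketch. Formulas (2) and (3) are not reproduced in this text, so the sketch assumes the usual Shannon entropy (base 2) over the characters adjacent to the string. The function names are hypothetical, and the default weight λ = 0.62 is the value reported in the experimental section.

```python
import math
from collections import Counter
from typing import List, Tuple


def _entropy(counts: Counter) -> float:
    """Shannon entropy (base 2, an assumption) of a neighbour-character distribution."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def neighbor_entropies(corpus: List[str], s: str) -> Tuple[float, float]:
    """Return (left entropy, right entropy) of string s over all its occurrences."""
    left, right = Counter(), Counter()
    for line in corpus:
        start = line.find(s)
        while start != -1:
            if start > 0:
                left[line[start - 1]] += 1
            end = start + len(s)
            if end < len(line):
                right[line[end]] += 1
            start = line.find(s, start + 1)
    return _entropy(left), _entropy(right)


def relative_adjacency_entropy(corpus: List[str], w: str, lam: float = 0.62) -> float:
    """Follow the structure of formulas (4)-(6): weight, subtract substring entropy, take min."""
    c_left, c_right = neighbor_entropies(corpus, w)
    _, c_right_sub = neighbor_entropies(corpus, w[:-1])  # right entropy of w_left
    c_left_sub, _ = neighbor_entropies(corpus, w[1:])    # left entropy of w_right
    rel_right = lam * c_right - (1 - lam) * c_right_sub  # formula (4)
    rel_left = lam * c_left - (1 - lam) * c_left_sub     # formula (5)
    return min(rel_left, rel_right)                      # formula (6)


if __name__ == "__main__":
    varied = ["a葛优瘫b", "c葛优瘫d"]
    fixed = ["a葛优瘫b", "a葛优瘫b"]
    print(relative_adjacency_entropy(varied, "葛优瘫"))
    print(relative_adjacency_entropy(fixed, "葛优瘫"))
```

In the toy data, the string that occurs between varying neighbours receives a larger value than the one that always occurs in the same context, which matches the conclusion drawn above.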

3.3 Background document frequency

From the perspective of human memory, a new word is one that has never appeared in previous memories. We use a large-scale background corpus to simulate human memory and compare the frequency of a string in the background corpus with its frequency in the corpus from which the string is extracted (the foreground corpus). If the frequency in the foreground corpus is much larger than that in the background corpus, the string is likely to be a new word. This feature also helps filter garbage strings formed by adhesion of high-frequency words, such as ‘也不’ and ‘了一’, because their frequencies in the foreground and background corpora are similar. The frequency ratio of a string ω in the two corpora X and Y is defined as follows.

(7)

where f(ω, X) and f(ω, Y) are the frequencies of ω in corpora X and Y, X is the foreground corpus, and Y is the background corpus.
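As an illustration of the idea, the following Python sketch compares foreground and background frequencies on toy data. Formula (7) is not reproduced in this text, so the plain ratio f(ω, X) / f(ω, Y), the smoothing constant `eps`, and the use of the total character count as the corpus size are all assumptions made for the example.

```python
from typing import List


def relative_frequency(corpus: List[str], s: str) -> float:
    """Occurrences of s divided by the corpus size (assumed here: total characters)."""
    total_chars = sum(len(line) for line in corpus)
    count = sum(line.count(s) for line in corpus)
    return count / total_chars if total_chars else 0.0


def background_ratio(s: str, foreground: List[str], background: List[str],
                     eps: float = 1e-9) -> float:
    """Assumed form of the ratio; eps (hypothetical) avoids division by zero."""
    return relative_frequency(foreground, s) / (relative_frequency(background, s) + eps)


if __name__ == "__main__":
    foreground = ["葛优瘫了一", "了一葛优瘫"]   # toy foreground corpus X
    background = ["今天了一", "了一下雨"]       # toy background corpus Y
    print(background_ratio("葛优瘫", foreground, background))
    print(background_ratio("了一", foreground, background))
```

A string absent from the background corpus gets a very large ratio, while a high-frequency adhesion such as ‘了一’ gets a ratio close to 1.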

3.4 Dice

The Dice of candidate w is estimated by Equation 8, where xi denotes the characters in candidate w, i.e., w = x1, x2, …, xn.

(8)

3.5 SCP

The SCP of candidate w is estimated by Equation 9, where xi denotes the characters in candidate w.

(9)

4 Parallel Implementation of New Word Feature Quantization Algorithm

4.1 MapReduce parallel computing model

MapReduce is a programming model divided into two stages, Map and Reduce. The input and output of each stage are key-value pairs. In the Map stage, the Map function receives each line of input as a key-value pair (K1, V1) and outputs a list of new key-value pairs List(K2, V2). Before the Reduce stage, all Map output is grouped by key into (K2, List(V2)); this process is called shuffle. Each group (K2, List(V2)) is the input of the Reduce function, which outputs the final results (K3, V3). Because the micro-blog corpus is large and the new word feature quantization algorithms are time-consuming, the overall recognition speed suffers. We therefore parallelize the calculation of the background document frequency, the relative adjacency entropy, and the enhanced mutual information with the MapReduce model.

4.2 Parallel implementation of background document frequency algorithm

Background document frequency is the ratio between the frequency of candidate word w in the foreground corpus X and its frequency in the background corpus Y. To make the coupling of the multiple features more efficient, we compute the word frequencies in X and Y separately and compute the ratio in the multi-feature coupling Reduce. The frequency of a candidate word in corpus X is computed as follows. First, we split corpus X via Split and pass each split to the Map function. The candidate word set W is passed to the Map function via the Configuration method. In the Map function, we count the occurrences k of each candidate word in the corpus split and output the candidate word w as the key and the count k as the value. The pseudo code is shown in Table 3. In the Reduce function, the values with the same key are summed to obtain the count of candidate word w in corpus X. The sum is then divided by the size of corpus X to obtain the frequency of the candidate word, which is the output. The pseudo code is shown in Table 4. The overall system diagram is shown in Fig.2.

Table 3 Map operation on parallelization of background document frequency.

In which, the implementation process of step (3) is:
1. Input W[], 〈k1, v1〉
2. For i from 0 to length of W
3.   While (index != -1)
4.     Index <- find(W[i], v1); // Find the position of candidate word W[i] in v1; if found, return the position, otherwise return -1
5.     If index != -1 then
6.       k++;
7.     End if
8.     Index++;
9.   End while
10.  Output 〈W[i], k〉
11. End for

Table 4 Reduce operation on parallelization of background document frequency.

The implementation process of step (4) is:
1. Input 〈W[i], List〈k〉〉
2. For x from 0 to length of List〈k〉
3.   sum <- sum + List〈k〉.x
4. End for
5. tf = sum / size // The count of candidate word W[i] in the corpus is divided by the corpus size to get the frequency
6. Output 〈W[i], tf〉

Fig.2 Parallelization of string frequency
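The Map and Reduce steps of Tables 3 and 4 can be simulated in a single Python process, as sketched here. This is not Hadoop code: the in-memory `shuffle`, the function names, and the choice of total character count as the corpus size are assumptions made so that the example is self-contained.

```python
from collections import defaultdict
from typing import Dict, Iterator, List, Tuple


def map_count(candidates: List[str], split_text: str) -> Iterator[Tuple[str, int]]:
    """Map: emit (candidate word, number of occurrences in this corpus split)."""
    for w in candidates:
        k = 0
        index = split_text.find(w)
        while index != -1:
            k += 1
            index = split_text.find(w, index + 1)  # continue after the last hit
        yield w, k


def shuffle(pairs: List[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group values by key, standing in for the framework's shuffle phase."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_frequency(word: str, counts: List[int], corpus_size: int) -> Tuple[str, float]:
    """Reduce: sum the partial counts and divide by the corpus size."""
    return word, sum(counts) / corpus_size


def frequency_job(candidates: List[str], splits: List[str]) -> Dict[str, float]:
    corpus_size = sum(len(s) for s in splits)  # assumed unit: characters
    pairs = [pair for s in splits for pair in map_count(candidates, s)]
    return dict(reduce_frequency(w, ks, corpus_size) for w, ks in shuffle(pairs).items())


if __name__ == "__main__":
    splits = ["葛优瘫葛优瘫", "北京瘫和葛优瘫"]
    print(frequency_job(["葛优瘫", "北京瘫"], splits))
```

Running the same job once on the foreground corpus and once on the background corpus gives the two frequencies whose ratio is taken in the coupling step.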

4.3 Parallelization of relative adjacency entropy

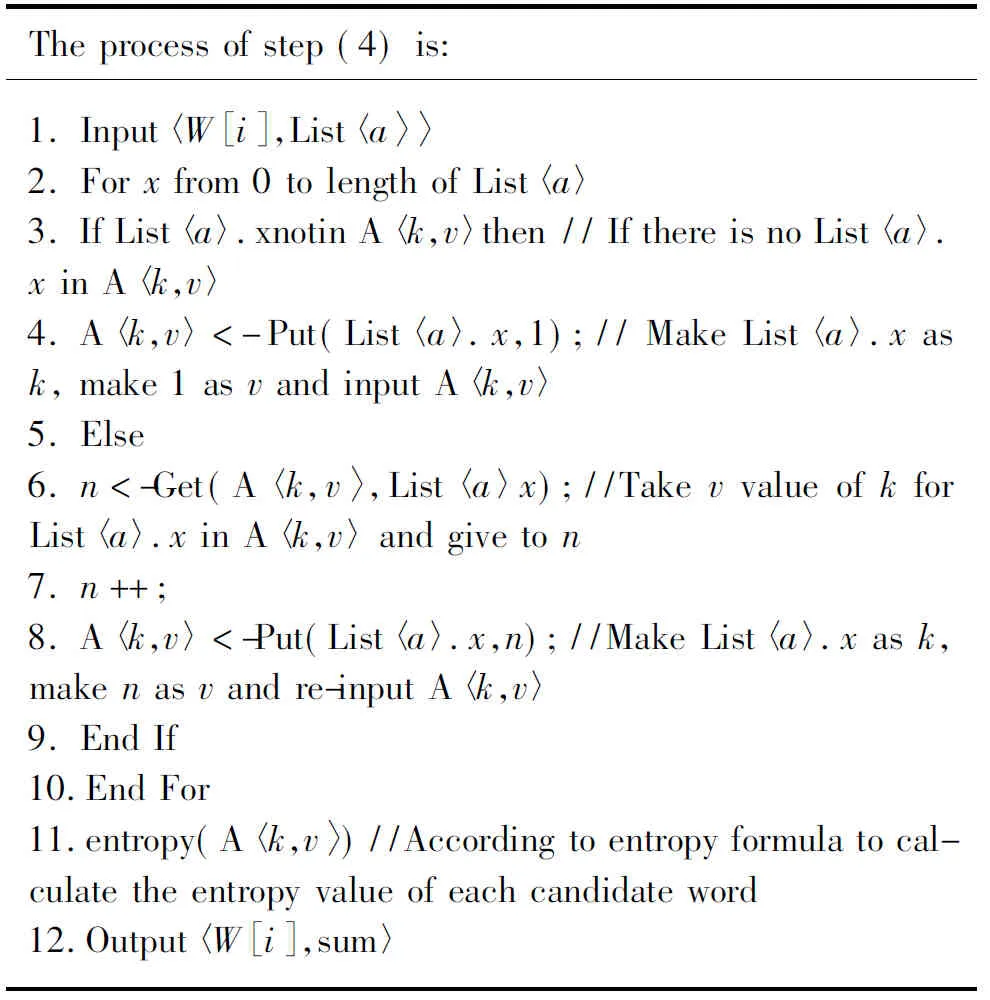

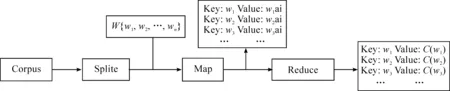

To obtain the relative adjacency entropy, the context entropy must be obtained first. The context entropy consists of the left entropy and the right entropy, which are computed by similar algorithms, so we describe the algorithm using the left entropy as an example. Corpus X is split via Split, and each split is passed to Map. The candidate word set W is passed to the Map function via the Configuration method. In Map, we find the character adjacent to the left of each occurrence of candidate word w, and output w as the key and the adjacent left character a as the value. The specific algorithm is shown in Table 5. In Reduce, we count the occurrences of each distinct left character of the candidate word, compute the left entropy of the candidate word, and output the left entropy of each candidate word. The specific algorithm is shown in Table 6. The overall process is shown in Fig.3.

Table 5 Map operation on parallelization of relative adjacency entropy

In which, the process of step (3) is:
1. Input W[],
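The left-entropy job described in this subsection can be sketched in Python as follows. The sketch runs in one process instead of on a cluster, the function names are hypothetical, and the base-2 Shannon entropy is an assumption because the entropy formulas are not reproduced in this text.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, Iterator, List, Tuple


def map_left_chars(candidates: List[str], split_text: str) -> Iterator[Tuple[str, str]]:
    """Map: emit (candidate word, character adjacent to its left) for every occurrence."""
    for w in candidates:
        index = split_text.find(w)
        while index != -1:
            if index > 0:  # an occurrence at the start of a split has no left character
                yield w, split_text[index - 1]
            index = split_text.find(w, index + 1)


def reduce_left_entropy(word: str, left_chars: List[str]) -> Tuple[str, float]:
    """Reduce: count each distinct left character, then compute the entropy."""
    counts = Counter(left_chars)
    total = sum(counts.values())
    entropy = -sum((n / total) * math.log2(n / total) for n in counts.values())
    return word, entropy


def left_entropy_job(candidates: List[str], splits: List[str]) -> Dict[str, float]:
    groups: Dict[str, List[str]] = defaultdict(list)  # stands in for shuffle
    for s in splits:
        for w, a in map_left_chars(candidates, s):
            groups[w].append(a)
    return dict(reduce_left_entropy(w, chars) for w, chars in groups.items())


if __name__ == "__main__":
    splits = ["我葛优瘫", "你葛优瘫", "我葛优瘫他葛优瘫"]
    print(left_entropy_job(["葛优瘫"], splits))
```

The right entropy follows by emitting the character after each occurrence instead of the one before it.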

4.4 Multi-feature data coupling and SVM

After obtaining the feature data of the candidate new words (such as the enhanced mutual information, the relative adjacency entropy, and the background document frequency), we couple the features into a feature vector. The data come from different files, one per feature. Each mapper knows the name of the file whose data stream it processes, so it emits the candidate word w as the key and tags the value with the file name. After the map output is wrapped, MapReduce performs the usual partition, shuffle, and sort operations. The Reduce function receives the input data and takes the full cross product of the values, generating all combinations in which each tag appears at most once. The overall process is shown in Fig.4.
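The coupling step resembles a reduce-side join, which can be sketched in Python as follows. The sketch simplifies the cross product by assuming that each feature file holds at most one value per candidate word. The source names, the feature values, and the rule that a word missing any feature is dropped are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterator, List, Optional, Tuple

Record = Tuple[str, float]
Tagged = Tuple[str, float]


def map_tag(source_name: str, records: List[Record]) -> Iterator[Tuple[str, Tagged]]:
    """Map: key each record by candidate word and tag the value with its source file."""
    for word, value in records:
        yield word, (source_name, value)


def reduce_join(word: str, tagged_values: List[Tagged],
                feature_order: List[str]) -> Optional[Tuple[str, List[float]]]:
    """Reduce: build one feature vector, using each tag at most once."""
    by_source = dict(tagged_values)
    if all(name in by_source for name in feature_order):
        return word, [by_source[name] for name in feature_order]
    return None  # assumed: a word missing any feature is dropped


def couple_features(sources: Dict[str, List[Record]],
                    feature_order: List[str]) -> Dict[str, List[float]]:
    groups: Dict[str, List[Tagged]] = defaultdict(list)  # stands in for shuffle and sort
    for name, records in sources.items():
        for word, tagged in map_tag(name, records):
            groups[word].append(tagged)
    vectors: Dict[str, List[float]] = {}
    for word, tagged_values in groups.items():
        joined = reduce_join(word, tagged_values, feature_order)
        if joined is not None:
            vectors[joined[0]] = joined[1]
    return vectors


if __name__ == "__main__":
    sources = {  # made-up feature values, one "file" per feature
        "mi": [("葛优瘫", 3.2), ("了一", 0.1)],
        "entropy": [("葛优瘫", 0.62)],
        "bf": [("葛优瘫", 40.0), ("了一", 0.8)],
    }
    print(couple_features(sources, ["mi", "entropy", "bf"]))
```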

Table 6 Reduce operation on parallelization of relative adjacency entropy.

The process of step (4) is:
1. Input 〈W[i], List〈a〉〉
2. For x from 0 to length of List〈a〉
3.   If List〈a〉.x not in A〈k, v〉 then // If there is no List〈a〉.x in A〈k, v〉
4.     A〈k, v〉 <- Put(List〈a〉.x, 1); // Make List〈a〉.x as k, make 1 as v and input A〈k, v〉
5.   Else
6.     n <- Get(A〈k, v〉, List〈a〉.x); // Take the v value of k for List〈a〉.x in A〈k, v〉 and give it to n
7.     n++;
8.     A〈k, v〉 <- Put(List〈a〉.x, n); // Make List〈a〉.x as k, make n as v and re-input A〈k, v〉
9.   End If
10. End For
11. sum <- entropy(A〈k, v〉) // Calculate the entropy value of the candidate word according to the entropy formula
12. Output 〈W[i], sum〉

SVM is a popular statistical machine learning method that often achieves good classification results. It maps the sample points from a low-dimensional space to a high-dimensional feature space and finds a hyperplane that maximizes the distance between each class of data and the hyperplane (the optimal hyperplane). Different kernel functions lead to different results. From the feature vectors formed from the multi-feature data of the candidate new words, this paper uses 70% as the training set and 30% as the testing set. With manually assigned labels, we train the SVM micro-blog new word recognition model and carry out 10-fold cross-validation.

Fig.3 Parallelization of Relative Adjacency Entropy

Fig.4 Overall parallelization process

5 Experimental Results and Analysis

In the experiment, we use a corpus of 5.2 million micro-blogs, which includes 591 micro-blogs from 2009, 60795 from 2010, 763027 from 2011, 1699484 from 2012, 17882335 from 2013, 681449 from 2014, and 198925 from 2015. We use the micro-blogs from 2009 to 2013 as the background corpus to simulate human memory, and the micro-blogs from 2014 and 2015 as the foreground corpus. The news corpus used by the filtering algorithm is from Fudan University and includes 9804 articles. The candidate new words are obtained by the filtering algorithm that combines the news corpus with the stop word list; the preliminary result contains 13273 words in total. In the relative adjacency entropy algorithm, we set the weight to 0.62. The experiment shows that reducing the weight of the substring keeps new words from being deleted by mistake. The parallelized implementation runs on one Lenovo computer with a Celeron dual-core T3000 1.80 GHz CPU and 16 GB of memory. The distributed environment is simulated by running VirtualBox virtual machines on this computer: six virtual machines with 512 MB of memory each, all running the CentOS operating system. The system is developed in Java on the Eclipse platform. The SVM uses the RBF kernel function.

5.1 Methods and standards

We use the precision rate (P), recall rate (R), and F-measure to evaluate the experimental results. The specific definitions of the measurements are as follows.

(10)

(11)

(12)

In formula (10), R is the recall rate of the recognized new words. In formula (11), P is the precision rate of the recognized new words. In formula (12), β = 1, so the F value is the harmonic mean of the precision rate and the recall rate. Together, these measurements reflect the overall performance of new word recognition.
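For reference, the three measures can be computed as in the following Python sketch. Formulas (10) to (12) are not reproduced in this text, so the sketch assumes the standard definitions: P is the share of recognized words that are true new words, R is the share of true new words that are recognized, and F = (1 + β²)PR / (β²P + R). The function name and the sample word sets are hypothetical.

```python
from typing import Iterable, Tuple


def precision_recall_f(predicted: Iterable[str], gold: Iterable[str],
                       beta: float = 1.0) -> Tuple[float, float, float]:
    """Standard precision, recall and F-measure over sets of words (assumed definitions)."""
    predicted_set, gold_set = set(predicted), set(gold)
    correct = len(predicted_set & gold_set)
    p = correct / len(predicted_set) if predicted_set else 0.0
    r = correct / len(gold_set) if gold_set else 0.0
    if p == 0.0 and r == 0.0:
        return p, r, 0.0
    f = (1 + beta ** 2) * p * r / (beta ** 2 * p + r)
    return p, r, f


if __name__ == "__main__":
    predicted = {"葛优瘫", "北京瘫", "了一", "也不"}   # words the model marks as new
    gold = {"葛优瘫", "北京瘫", "伐木累"}              # manually labelled new words
    print(precision_recall_f(predicted, gold))
```

With β = 1 the F value reduces to 2PR / (P + R), the harmonic mean mentioned above.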

5.2 Experimental results and analysis

Table 7 compares the proposed method with previous methods. In Table 7, BF represents the background document frequency, MI* the enhanced mutual information, E* the relative adjacency entropy, and F the frequency. Comparing (MI+F+Dice+SCP+LCE+RCE) with (MI*+F+Dice+SCP+LCE+RCE) shows that the enhanced mutual information improves both the precision rate and the recall rate. Similarly, both the precision rate and recall rate of (MI*+F+Dice+SCP+LCE+RCE) are higher than those of (MI*+F+Dice+SCP+LCE+RCE+E*). The comparison between (MI*+F+Dice+SCP+LCE+RCE+E*) and (MI*+F+Dice+SCP+LCE+RCE+E*+BF) shows that the new word recognition accuracy is lower without BF. According to the results, the enhanced mutual information and the relative adjacency entropy have positive effects. Compared with the traditional method (MI+F+Dice+SCP+LCE+RCE), the combined features improve the precision rate, recall rate, and F value of micro-blog new word recognition. The final F value is 86.98%. Experimental results of new word recognition are shown in Table 8 and experimental results of non-new word recognition are shown in Table 9.

Table 7 Experimental results of micro-blog new word recognition. %

Table 8 Experimental results of new word recognition.

Table 9 Experimental results of non-new word recognition.

The parallelized algorithm is run on one, three, and six nodes. We run it on micro-blog corpora of different sizes and record the time the system spends recognizing new words. The results are shown in Fig.5. The experimental results indicate that the recognition speed of micro-blog new words increases as the number of nodes increases.

Fig.5 Operation speed chart on micro-blog new word recognition.

6 Conclusion

We improve the traditional mutual information and adjacency entropy methods and put forward enhanced mutual information and relative adjacency entropy. Experiments verify that the parallelization shortens the overall time of new word recognition. The precision rate and recall rate of micro-blog new word recognition are improved by an SVM classification model trained with the features generated by the above methods, and the F value reaches 86.98%. In the future, we will explore further effective new word detection features and incorporate them into the model to improve the performance of the micro-blog new word recognition task.

Acknowledgment

The research is supported by the National Key Research and Development Plan (No. 2016YFB0800603), the National Natural Science Foundation of China (No. 61562093, 61422213, 61650202), the Key Project of Applied Basic Research Program of Yunnan Province (No. 2016FA024), and the Key Program of the Chinese Academy of Sciences (No. QYZDB-SSW-JSC003).

[1] R. Sproat and T. Emerson, The first international Chinese word segmentation bakeoff, in Proceedings of the Second SIGHAN Workshop on Chinese Language Processing, Sapporo, Japan, vol.17, pp.133-143, 2003.

[2] Zhang Dexin, ‘There will be no fish if the water is too clear’ - My Normative View on New Words, Journal of Peking University: Philosophy and Social Science, vol.37, no.5, pp.106-119, 2000.

[3] X. Huang and R. F. Li, Discovery Method of New Words in Blog Contents, Modern Electronics Technique, vol.36, no.2, pp.144-146, 2013.

[4] Z. F. Sui, Y. R. Chen, and Y. R. Wu, et al., The Research on the Automatic Term Extraction in the Domain of Information Science and Technology. http://icl.pku.edu.cn/icl_tr/ papers_2000-2003 /2002/E026-szf-The Research on the Automatic Term Extraction in the Domain of Information Science and Technology.pdf

[5] V. Sornlertlamvanich, T. Potipiti, and T. Charoenporn, Automatic Corpus-Based Thai Word Extraction with the C4.5 Learning Algorithm, in Proceedings of International Conference on Computational Linguistics, Germany, vol.2, pp.802-807, 2000.

[6] F. Peng, F. Feng, and A. McCallum, Chinese segmentation and new word detection using conditional random fields, in Proceedings of the 20th International Conference on Computational Linguistics, Switzerland, pp.562, 2004.

[7] T. Liu, B. Q. Liu, Z. M. Xu, and X. L. Wang, Automatic domain-specific term extraction and its application in text classification, Acta Electronica Sinica, vol.35, no.2, pp.328, 2007.

[8] Z. F. Lin and X. F. Jiang, New Word Recognition Based on Internal Model of Word, Computer and Modernization, no.11, pp.56-58, 2010.

[9] H. Li, C. N. Huang, J. Gao, and X. Fan, The Use of SVM for Chinese New Word Identification, in Proceedings of First International Joint Conference on Natural Language Processing, Sanya, China, pp.723-732, 2004.

[10] X. B. Zhao and H. P. Zhang, New Word Recognition Based on Iterative Algorithm, Computer Engineering, vol.40, no.7, pp.154-158, 2014.

[11] M. Wang, L. Lin, and F. Wang, New word identification in social network text based on time series information, in Proceedings of IEEE International Conference on Computer Supported Cooperative Work in Design, IEEE Press, pp.552-557, 2014.

[12] C. Xiao, J. Gan, and B. Wen, et al., New Word Recognition Based on Micro-blog Contents, Pattern Recognition and Artificial Intelligence, vol.27, no.2, pp.141-145, 2014.

[13] Q. L. Su and B. Q. Liu, Chinese new word extraction from MicroBlog data, in Proceedings of International Conference on Machine Learning and Cybernetics, Tianjin, China: IEEE Press, pp.1874-1879, 2013.

[14] C. Li and Y. Xu, Based on Support Vector and Word Features New Word Discovery Research, Trustworthy Computing and Services. Berlin, Germany: Springer Press, pp.698-701, 2012.

Jianhou Gan received the Ph.D. degree in Metallurgical Physical Chemistry from Kunming University of Science and Technology, China, in 2016. In 1998, he was a faculty member at Yunnan Normal University, China. Currently, he is a professor at Yunnan Normal University, China. He has published over 40 refereed journal and conference papers. His research interests cover educational informatization for nationalities, semantic Web, database, and intelligent information processing.

Bin Wen received the Ph.D. degree in computer application technology from China University of Mining & Technology, Beijing, China, in 2013. In 2005, he was a faculty member at Yunnan Normal University, China. Currently, he is an associate professor at Yunnan Normal University, China. His research interests cover intelligent information processing and emergency management.

Wei Zhang is now an Assistant Professor at the Institute of Information Engineering, Chinese Academy of Sciences, Beijing 100093, China. He received his Ph.D. degree from the Department of Computer Science at City University of Hong Kong, Hong Kong, China, in 2015. Before joining the Chinese Academy of Sciences, he was a visiting scholar in the DVMM group of Columbia University, New York, NY, USA, in 2014. His research interests include large-scale visual instance search and mining, multimedia, and digital forensic analysis. He won second place in the TRECVID Instance Search task in 2012 and the Best Demo Award at ACM-HK openday 2013.

Xiaochun Cao received the B.E. and M.E. degrees in computer science from Beihang University, Beijing, China, and the Ph.D. degree in computer science from the University of Central Florida, Orlando, FL, USA. He has been a Professor with the Institute of Information Engineering, Chinese Academy of Sciences, Beijing, China, since 2012. He spent about three years with ObjectVideo Inc. as a Research Scientist. From 2008 to 2012, he was a Professor with Tianjin University, Tianjin, China. He has authored and co-authored over 120 journal and conference papers. He is a fellow of the IET. He is on the Editorial Board of the IEEE Transactions on Image Processing. His dissertation was nominated for the University of Central Florida's university-level Outstanding Dissertation Award. In 2004 and 2010, he was a recipient of the Piero Zamperoni Best Student Paper Award at the International Conference on Pattern Recognition.

Manuscript received: 2016-12-20; accepted: 2017-01-20

M.S. degree in computer application technology from Yunnan Normal University, China, in 2017. Now, his research interests cover pattern recognition and natural language processing.

•Chaoting Xiao and Bin Wen are with School of Information Science and Technology, Yunnan Normal University, Kunming, Yunnan, China. E-mail: xiaochaoting@gmail.com; wenbin@ynnu.edu.cn.

•Jianhou Gan is with Key Laboratory of Educational Informatization for Nationalities(Yunnan Normal University),Ministry of Education, Kunming,Yunnan, China. E-mail: ganjh@ynnu.edu.cn.

•Chaoting Xiao, Wei Zhang and Xiaochun Cao are with Institute of Information Engineering, Chinese Academy of Sciences, Beijing, China. E-mail: wzhang.cu@gmail.com, caoxiaochun@iie.ac.cn.

*To whom correspondence should be addressed.